Documentation

Technical reference for the MocapX Maya plug-in

Requirements

Supported Maya Versions

Plug-in Version 2.2.2

- Maya 2026

- Maya 2025

- Maya 2024

Compatible iOS Devices

Requires iOS / iPadOS 15.0 or later

Operating Systems

- Windows 10 / 11

- macOS 10.14 Mojave and newer

- iOS 15.0 or later
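If you want to script a pre-flight check before installing, the version rules above reduce to two comparisons. This is a minimal sketch and not part of MocapX. The function names are hypothetical, and the example does not show how you obtain the version numbers (from Maya or from the device's Settings).

```python
# Hypothetical pre-flight check; not part of the MocapX plug-in.
SUPPORTED_MAYA_VERSIONS = {2024, 2025, 2026}  # plug-in version 2.2.2
MIN_IOS_VERSION = (15, 0)                     # iOS / iPadOS 15.0 or later


def parse_version(text: str) -> tuple[int, ...]:
    """Turn a dotted version string such as '15.0' or '17.4.1' into a tuple of ints."""
    return tuple(int(part) for part in text.split("."))


def maya_supported(year: int) -> bool:
    """Return True if the Maya release year is in the supported list."""
    return year in SUPPORTED_MAYA_VERSIONS


def ios_supported(version: str) -> bool:
    """Return True if the iOS / iPadOS version is 15.0 or later.

    Tuples are compared instead of strings, because as strings '9.3' > '15.0'.
    """
    parsed = parse_version(version)
    # Pad short versions so '15' compares like '15.0'.
    parsed = parsed + (0,) * (len(MIN_IOS_VERSION) - len(parsed))
    return parsed >= MIN_IOS_VERSION
```

For example, `maya_supported(2025)` is true, and `ios_supported("14.8")` is false.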

Installation

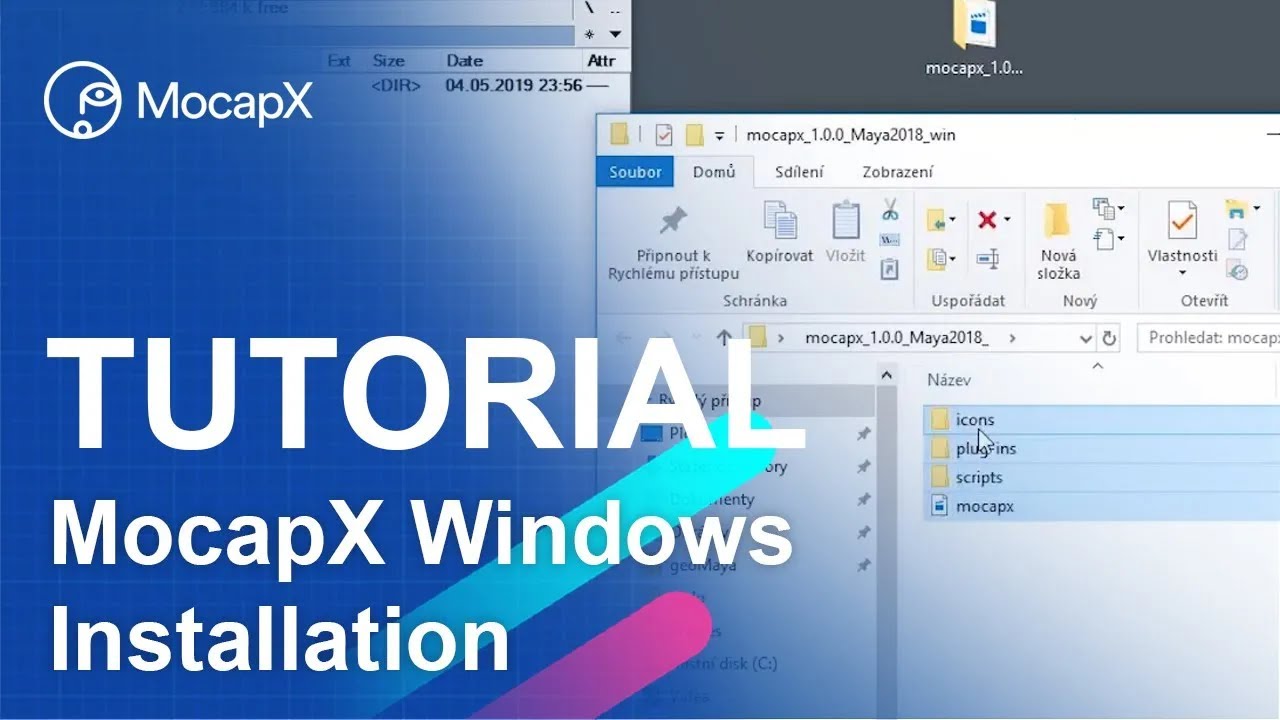

Windows Installation

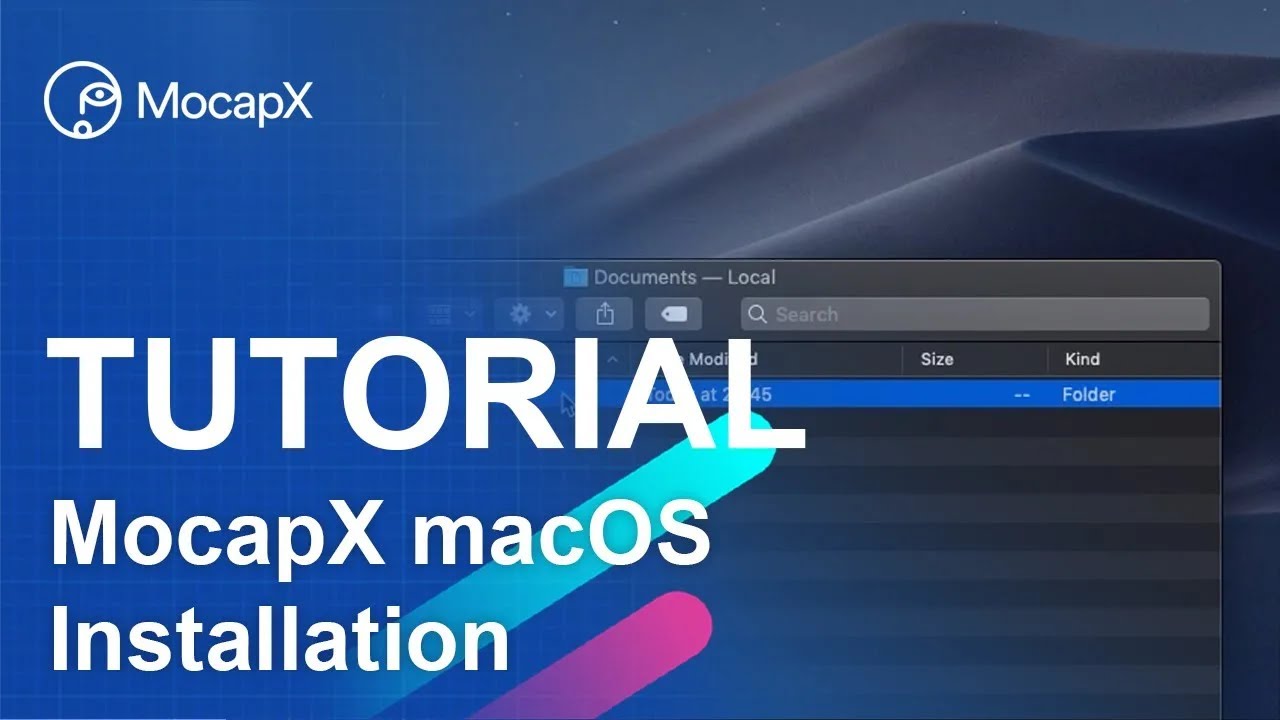

macOS Installation

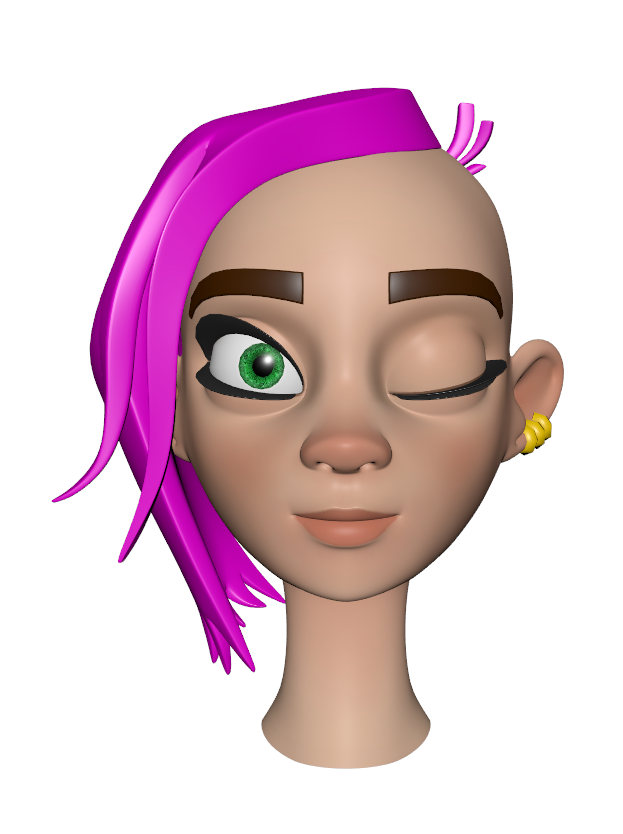

Facial Expression Reference

MocapX captures 52 ARKit-compatible facial expressions, such as eyeBlink_L. In ARKit, each expression is a weight that ranges from 0.0 (neutral face) to 1.0 (fully activated pose).


Shelf Tools

After loading the plug-in, the MocapX shelf appears in Maya with the following tools:

PoseLib Editor

Create and manage pose presets that map captured expressions to your rig's controllers.
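A common way to drive a rig from expression weights is additive pose blending. Each pose stores the controller values for one fully activated expression. The expression's weight (0.0 to 1.0) then scales that pose's offset from the neutral pose. The sketch below assumes this model purely for illustration. It is not taken from MocapX, and the controller and attribute names are hypothetical.

```python
# Hypothetical sketch of additive pose blending; not the MocapX implementation.
Pose = dict[str, float]  # "controller.attribute" -> value


def blend_poses(neutral: Pose, poses: dict[str, Pose], weights: dict[str, float]) -> Pose:
    """Return controller values for a set of expression weights.

    Each pose adds weight * (pose value - neutral value) on top of the
    neutral pose. Weights are clamped to the 0.0-1.0 range ARKit uses.
    """
    result = dict(neutral)
    for expression, weight in weights.items():
        pose = poses.get(expression)
        if pose is None:
            continue  # no pose preset defined for this expression
        w = min(max(weight, 0.0), 1.0)
        for attr, value in pose.items():
            base = neutral.get(attr, 0.0)
            result[attr] = result.get(attr, base) + w * (value - base)
    return result


neutral = {"lid_L.ty": 0.0, "jaw.rx": 0.0}
poses = {"eyeBlink_L": {"lid_L.ty": -1.0}, "jawOpen": {"jaw.rx": 20.0}}
print(blend_poses(neutral, poses, {"eyeBlink_L": 0.5, "jawOpen": 1.0}))
```

At half weight, eyeBlink_L moves the hypothetical lid controller halfway to its pose value (-0.5). At full weight, jawOpen applies its full 20 degrees. Because offsets are summed, two poses that drive the same attribute combine rather than override each other.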

Attribute Collection

Group and organize the attributes that receive motion capture data.

Create PoseLib

Create a new Pose Library node to store expression-to-rig mappings.

Create Pose

Add a new pose to the active Pose Library, capturing current controller values.

Create Realtime Device

Create a new Realtime Device node for receiving live data from the iOS app.

Create Clip Reader

Create a new Clip Reader node for playing back recorded motion capture clips.
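A recorded clip and the Maya scene do not necessarily share a frame rate, so playback needs a value for every scene frame. The sketch below assumes that a clip channel is a list of timestamped samples (seconds) sorted by time, and it uses linear interpolation between neighbouring samples. The Clip Reader's actual file format and sampling method are not described here, so treat the function names and the approach as hypothetical.

```python
import bisect

# Hypothetical sketch of resampling one recorded channel; not the Clip Reader's code.


def sample_clip(times: list[float], values: list[float], t: float) -> float:
    """Linearly interpolate a recorded channel at time t (seconds).

    times must be sorted ascending and match values in length.
    Times outside the clip are clamped to the first or last sample.
    """
    if not times:
        raise ValueError("clip has no samples")
    if t <= times[0]:
        return values[0]
    if t >= times[-1]:
        return values[-1]
    i = bisect.bisect_right(times, t)  # times[i - 1] <= t < times[i]
    t0, t1 = times[i - 1], times[i]
    v0, v1 = values[i - 1], values[i]
    return v0 + (v1 - v0) * (t - t0) / (t1 - t0)


def frames_from_clip(times: list[float], values: list[float], fps: float,
                     start_frame: int, end_frame: int) -> dict[int, float]:
    """Sample the clip once per scene frame, assuming frame 0 is clip time 0."""
    return {f: sample_clip(times, values, f / fps) for f in range(start_frame, end_frame + 1)}
```

For example, a channel recorded as `[0.0, 1.0, 0.0]` at 0, 1, and 2 seconds gives 0.5 at 0.5 seconds. Sampling at 2 fps therefore yields frames 0 to 4 with values 0.0, 0.5, 1.0, 0.5, 0.0.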

Live

Start live data streaming from the connected iOS device to Maya.

Pause

Pause the live data stream without disconnecting from the device.
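You can think of the difference between Live and Pause as a small state machine. Pausing stops applying incoming data but keeps the connection open, so resuming does not require reconnecting. This is a hypothetical model of the behaviour described above, not MocapX's code. The class and method names are invented for illustration.

```python
from enum import Enum, auto

# Hypothetical model of the Live / Pause shelf buttons; not MocapX code.


class StreamState(Enum):
    DISCONNECTED = auto()
    PAUSED = auto()  # connected, but incoming frames are ignored
    LIVE = auto()    # connected and applying incoming frames


class RealtimeStream:
    def __init__(self) -> None:
        self.state = StreamState.DISCONNECTED
        self.applied: list[dict[str, float]] = []  # frames that reached the rig

    def connect(self) -> None:
        if self.state is StreamState.DISCONNECTED:
            self.state = StreamState.PAUSED

    def live(self) -> None:
        # Live streams from an already connected device.
        if self.state is StreamState.DISCONNECTED:
            raise RuntimeError("connect to the device first")
        self.state = StreamState.LIVE

    def pause(self) -> None:
        # Pausing keeps the connection: LIVE goes to PAUSED, not DISCONNECTED.
        if self.state is StreamState.LIVE:
            self.state = StreamState.PAUSED

    def on_frame(self, weights: dict[str, float]) -> None:
        """Called for every frame received from the device."""
        if self.state is StreamState.LIVE:
            self.applied.append(weights)
```

In this model, frames that arrive while paused are simply dropped. Pressing Live again resumes without a new `connect()` call.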

Bake Tool

Bake captured motion data onto your rig's animation curves.
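Baking replaces a live connection with ordinary keyframes. The driven value is evaluated once per frame, and each result is stored as a key. The sketch below shows that idea in plain Python, with a hypothetical `evaluate` callback standing in for the rig. It also includes an optional clean-up pass that removes keys on flat segments, which is safe only if you assume linear interpolation between keys. Inside Maya, the resulting keys could be written with `cmds.setKeyframe`, which this example does not call.

```python
from typing import Callable

# Hypothetical sketch of baking; not the MocapX Bake Tool itself.


def bake(evaluate: Callable[[int], float], start: int, end: int,
         step: int = 1) -> list[tuple[int, float]]:
    """Sample evaluate(frame) from start to end inclusive as (frame, value) keys."""
    if step < 1:
        raise ValueError("step must be at least 1")
    frames = list(range(start, end + 1, step))
    if frames and frames[-1] != end:
        frames.append(end)  # always key the last frame
    return [(f, evaluate(f)) for f in frames]


def drop_redundant_keys(keys: list[tuple[int, float]]) -> list[tuple[int, float]]:
    """Remove interior keys whose value equals both neighbours.

    Assumes linear interpolation, so removing them does not change the curve.
    """
    if len(keys) <= 2:
        return list(keys)
    kept = [keys[0]]
    for prev, cur, nxt in zip(keys, keys[1:], keys[2:]):
        if not (prev[1] == cur[1] == nxt[1]):
            kept.append(cur)
    kept.append(keys[-1])
    return kept
```

A step larger than 1 produces lighter curves at the cost of detail. The last frame is always keyed so the baked range ends where you asked.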

Demo Rig

Load a pre-configured demo rig to test MocapX immediately.

Help

Open MocapX documentation and support resources.

Key Components

- Realtime Device Node

- Clip Reader

- Attribute Collection

- PoseLib Editor

- Adaptor Node

Basic Tutorials

01 Getting Started

Learn how to quickly set up MocapX and capture your first animation.

02 Pose Library

Learn how to work with the Pose Library and manage pose presets.

03 Basic Connection

Connect your iOS device to Maya over Wi-Fi or USB.

Advanced Tutorials

04 Advanced Skeleton

Learn how to use Advanced Skeleton with MocapX for facial animation.

05 Eye and Head Connection

Learn how to connect eye tracking and head rotation data to your character.

06 2D Motion Capture

Learn how to use MocapX to capture motion for 2D animation.

07 Camera Tracking & Local Recordings

Learn how to use camera tracking and save recordings locally.

08 Camera Tracking & Streaming

Learn how to use camera tracking with live streaming to Maya.

09 Motion Capture with iPhone

Complete walkthrough of motion capture in Maya using the MocapX app.